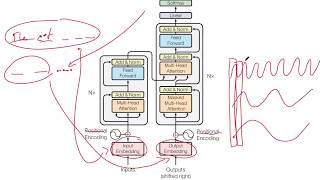

PyTorch Transformers from Scratch (Attention Is All You Need)

In this video, we read the original Transformer paper, "Attention Is All You Need", and implement it from scratch!

"Attention Is All You Need" paper:

https://arxiv.org/abs/1706.03762

A good blogpost on Transformers:

http://www.peterbloem.nl/blog/transfo...

❤ Support the channel ❤

/ @aladdinpersson

Paid Courses I recommend for learning (affiliate links, no extra cost for you):

⭐ Machine Learning Specialization https://bit.ly/3hjTBBt

⭐ Deep Learning Specialization https://bit.ly/3YcUkoI

MLOps Specialization http://bit.ly/3wibaWy

GAN Specialization https://bit.ly/3FmnZDl

NLP Specialization http://bit.ly/3GXoQuP

✨ Free Resources that are great:

NLP: https://web.stanford.edu/class/cs224n/

CV: http://cs231n.stanford.edu/

Deployment: https://fullstackdeeplearning.com/

FastAI: https://www.fast.ai/

My Deep Learning Setup and Recording Setup:

https://www.amazon.com/shop/aladdinpe...

GitHub Repository:

https://github.com/aladdinpersson/Mac...

✅ One-Time Donations:

Paypal: https://bit.ly/3buoRYH

▶ You Can Connect with me on:

Twitter / aladdinpersson

LinkedIn / aladdinperssona95384153

Github https://github.com/aladdinpersson

OUTLINE:

0:00 Introduction

0:54 Paper Review

11:20 Attention Mechanism

27:00 TransformerBlock

32:18 Encoder

38:20 DecoderBlock

42:00 Decoder

46:55 Putting it together to form The Transformer

52:45 A Small Example

54:25 Fixing Errors

56:44 Ending