Monte Carlo And Off-Policy Methods | Reinforcement Learning Part 3

The machine learning consultancy: https://truetheta.io

Want to work together? See here: https://truetheta.io/about/#wanttow...

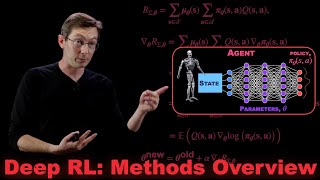

Part three of a six-part series on Reinforcement Learning. It covers the Monte Carlo approach to solving a Markov Decision Process with mere samples. At the end, we touch on off-policy methods, which enable RL when the data was generated by a different agent.
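The Monte Carlo idea is to estimate values by averaging sampled returns, with no model of the environment. Below is a minimal sketch of constant-alpha MC prediction, using the update V(S_t) ← V(S_t) + α[G_t − V(S_t)]. It assumes episodes arrive as lists of (state, reward) pairs. The function name and episode format are hypothetical and are not taken from the linked Blackjack code.

```python
from collections import defaultdict


def constant_alpha_mc(episodes, alpha=0.1, gamma=1.0):
    """Every-visit constant-alpha Monte Carlo prediction (illustrative sketch).

    Each episode is a list of (state, reward) pairs, where `reward` is the
    reward received on the transition out of `state` (assumed format).
    Returns a dict mapping state -> estimated value.
    """
    V = defaultdict(float)  # unseen states start with value 0
    for episode in episodes:
        g = 0.0
        # Walk the episode backwards so the return G_t = R_{t+1} + gamma * G_{t+1}
        # is accumulated in a single pass.
        for state, reward in reversed(episode):
            g = reward + gamma * g
            # Nudge V(s) toward the sampled return with a fixed step size alpha.
            V[state] += alpha * (g - V[state])
    return dict(V)


# Usage: a two-state chain A -> B -> terminal that pays 1 on the final step.
episodes = [[("A", 0.0), ("B", 1.0)]] * 100
print(constant_alpha_mc(episodes, alpha=0.1))  # both values approach 1.0
```

Because each target is a complete sampled return rather than a bootstrapped estimate, the update needs nothing but observed rewards. This is what makes the method model-free.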

SOCIAL MEDIA

LinkedIn: / djrich90b91753

Twitter: / duanejrich

Github: https://github.com/Duane321

Enjoy learning this way? Want me to make more videos? Consider supporting me on Patreon: / mutualinformation

SOURCES

[1] R. Sutton and A. Barto. Reinforcement Learning: An Introduction (2nd ed.). MIT Press, 2018.

[2] H. van Hasselt et al. RL Lecture Series. DeepMind and UCL, 2021. DeepMind x UCL | Deep Learning Lectur...

SOURCE NOTES

The video covers topics from chapters 5 and 7 of [1]. The whole series is taught from [1], and [2] has been a useful secondary resource.

TIMESTAMP

0:00 What We'll Learn

0:33 Review of Previous Topics

2:50 Monte Carlo Methods

3:35 Model-Free vs. Model-Based Methods

4:59 Monte Carlo Evaluation

9:30 MC Evaluation Example

11:48 MC Control

13:01 The Exploration-Exploitation Trade-Off

15:01 The Rules of Blackjack and its MDP

16:55 Constant-alpha MC Applied to Blackjack

21:55 Off-Policy Methods

24:32 Off-Policy Blackjack

26:43 Watch the next video!

NOTES

Link to Constant-alpha MC applied to Blackjack: https://github.com/Duane321/mutual_in...

The off-policy method you see at 25:00 differs from the update rule in the textbook's eq. 7.9 (which becomes MC as n goes to infinity). That's because the textbook shows reweighted importance sampling (IS), while I show plain (high-variance) IS.
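To make the plain vs. reweighted distinction concrete, here is a minimal sketch contrasting ordinary importance sampling (average of ρ·G) with weighted importance sampling (ρ·G summed, divided by the sum of ρ). Here ρ is the product of π(a)/b(a) over an episode's actions. The toy setup is an assumption for illustration only and is not the Blackjack example from the video: one-step episodes with two actions, state-independent policies, action 0 paying 1 and action 1 paying 0. The target policy's true value is therefore 0.9.

```python
import random

# Toy setup (assumed for illustration): one-step episodes with two actions.
# Action 0 pays reward 1, action 1 pays 0.
TARGET = {0: 0.9, 1: 0.1}    # target policy pi, whose value we want (true value 0.9)
BEHAVIOR = {0: 0.5, 1: 0.5}  # behavior policy b that actually generated the data


def sample_episode(rng):
    """Generate one episode under the behavior policy as ([actions], return)."""
    a = 0 if rng.random() < BEHAVIOR[0] else 1
    return [a], (1.0 if a == 0 else 0.0)


def importance_sampling_estimates(episodes, target=TARGET, behavior=BEHAVIOR):
    """Return (ordinary, weighted) importance-sampling estimates of v_pi.

    rho is the product of pi(a) / b(a) over the episode's actions.
    Ordinary IS averages rho * G over episodes; weighted IS divides by sum(rho).
    """
    rhos, scaled = [], []
    for actions, g in episodes:
        rho = 1.0
        for a in actions:
            rho *= target[a] / behavior[a]
        rhos.append(rho)
        scaled.append(rho * g)
    ordinary = sum(scaled) / len(episodes)
    weighted = sum(scaled) / sum(rhos) if sum(rhos) > 0 else 0.0
    return ordinary, weighted


if __name__ == "__main__":
    rng = random.Random(0)
    data = [sample_episode(rng) for _ in range(10_000)]
    print(importance_sampling_estimates(data))  # both estimates should be near 0.9
```

With a single episode in which action 0 was taken, ordinary IS returns 1.8, outside the range of any possible return, while weighted IS returns the observed return of 1.0. Ordinary IS is unbiased but can swing widely on few samples. Weighted IS is biased but much steadier. That is why plain IS is described as high variance.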